Why the Algorithm Behind Your Practice Test Matters

By BB, Founder of GMAT Club. This post is designed to help you better understand how the GMAT algorithm works. It is adapted from our upcoming YouTube video, which covers the topic in depth.

Most GMAT practice tests give you a score at the end. You get a number, maybe a percentile, and a list of questions you got right or wrong. That's useful, but it's also where most practice tests stop.

The official GMAT does a lot more than that. It's built on a statistical framework called Item Response Theory (IRT) that's been refined for over 40 years. And the difference between a practice test that uses this framework and one that doesn't is the difference between a bathroom scale and a full diagnostic workup.

We built our adaptive algorithm at GMAT Club to match the official framework as closely as possible. Not because the science is interesting (though it is), but because it changes what we can tell you about your performance. Here's what that actually means for your prep.

Your score isn't a percentage

On a regular quiz, your score is basically "you got 15 out of 21 right." That tells you something, but not much. Was the test easy? Were those 6 wrong answers all on probability, or spread across everything? Were they hard questions that most people miss, or easy ones that you should have nailed?

On a properly calibrated adaptive test, the scoring algorithm knows more about each question than just whether you got it right. Every question has been statistically analyzed across thousands of test-takers and assigned parameters that describe its difficulty, how well it separates strong test-takers from weak ones, and how susceptible it is to guessing.
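Those three properties correspond to the parameters of the three-parameter logistic (3PL) model from IRT. Here is a minimal Python sketch of that model. The function name `p_correct` and the example values are illustrative assumptions, not GMAT Club's or GMAC's actual code or calibration.

```python
import math

def p_correct(theta: float, a: float, b: float, c: float) -> float:
    """Probability of a correct answer under the 3PL model.

    theta: test-taker ability
    a: discrimination (how sharply the item separates strong from weak)
    b: difficulty
    c: guessing floor (chance of a correct answer with no knowledge)
    """
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

# Hypothetical item: average discrimination, average difficulty, five choices.
for theta in (-2.0, 0.0, 2.0):
    print(theta, round(p_correct(theta, a=1.0, b=0.0, c=0.2), 3))
```

The probability never drops below `c`, which is how the model accounts for lucky guesses, and it climbs fastest around `theta == b`.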

When you get a hard, well-calibrated question right, the algorithm learns more about your ability than when you get an easy one right. When you miss a question that's highly resistant to guessing, that tells the algorithm something different than missing one where you could have narrowed it down to two choices.
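One way to make "learns more" concrete is the Fisher information of an item under the 3PL model. The sketch below uses the standard textbook formula. The item values are made up for illustration, and nothing here is claimed to be the exact weighting the official test uses.

```python
import math

def p_correct(theta, a, b, c):
    """3PL probability of a correct answer."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

def item_information(theta, a, b, c):
    """Fisher information of a 3PL item at ability theta."""
    p = p_correct(theta, a, b, c)
    q = 1.0 - p
    return (a ** 2) * (q / p) * ((p - c) / (1.0 - c)) ** 2

theta = 1.0  # hypothetical test-taker ability

# Same difficulty, different discrimination.
sharp_item = item_information(theta, a=1.5, b=1.0, c=0.2)
flat_item = item_information(theta, a=0.5, b=1.0, c=0.2)

# Same difficulty, different susceptibility to guessing.
guess_resistant = item_information(theta, a=1.0, b=1.0, c=0.0)
guess_prone = item_information(theta, a=1.0, b=1.0, c=0.25)

# Well-matched item versus one that is far too easy for this test-taker.
matched = item_information(theta, a=1.0, b=1.0, c=0.2)
far_too_easy = item_information(theta, a=1.0, b=-2.0, c=0.2)

print(sharp_item > flat_item, guess_resistant > guess_prone, matched > far_too_easy)
```

In this sketch, higher discrimination, a lower guessing floor, and a difficulty close to the test-taker's ability all raise the information an answer carries.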

All of that feeds into your score. Which is why two people who both get 15 out of 21 right can end up with different scores. It's not a bug. The test measured different things about each of them based on which questions they saw and how those questions behaved statistically.
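To illustrate how the same number correct can lead to different scores, here is a small sketch that estimates ability by maximum likelihood over a grid. The item parameters, the response pattern, and the grid search are all assumptions chosen for illustration. The official scoring procedure is not public.

```python
import math

def p_correct(theta, a, b, c):
    """3PL probability of a correct answer."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

def log_likelihood(theta, items, responses):
    """Log-likelihood of a response pattern at ability theta."""
    total = 0.0
    for (a, b, c), correct in zip(items, responses):
        p = p_correct(theta, a, b, c)
        total += math.log(p) if correct else math.log(1.0 - p)
    return total

def estimate_ability(items, responses):
    """Grid-search maximum likelihood estimate of theta on [-4, 4]."""
    grid = [i / 100.0 for i in range(-400, 401)]
    return max(grid, key=lambda t: log_likelihood(t, items, responses))

responses = [1, 1, 0, 1, 1, 0]  # both test-takers get 4 of 6 correct
difficulties = (0.5, 1.0, 1.5, 1.0, 1.5, 2.0)
hard_items = [(1.0, b, 0.2) for b in difficulties]        # (a, b, c) tuples
easy_items = [(1.0, b - 2.0, 0.2) for b in difficulties]  # same items, shifted easier

theta_hard = estimate_ability(hard_items, responses)
theta_easy = estimate_ability(easy_items, responses)
print(theta_hard, theta_easy)
```

Both hypothetical test-takers have the same count of correct answers, but the one who earned them on harder items ends up with a higher ability estimate.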

The test adapts to find your ceiling

A linear test gives everyone the same questions. If you're a 90th-percentile test-taker, you'll breeze through 15 easy questions and struggle on 6 hard ones. The test spent most of its time confirming what it already knew (you're good at easy stuff) and very little time measuring what matters (where exactly do you top out?).

An adaptive test doesn't waste your time that way. After a few questions, the algorithm has a rough sense of your ability, and it starts serving questions near that level. If you keep getting them right, it pushes harder. If you start missing, it pulls back. By the middle of the section, most questions are right around your ability level, which is exactly where the measurement is most precise.
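A common textbook way to "serve questions near that level" is to pick the unused item with the most Fisher information at the current ability estimate. The sketch below assumes that rule and a tiny made-up item pool. Real selection also has to handle content balancing and exposure control, which are left out here.

```python
import math

def p_correct(theta, a, b, c):
    """3PL probability of a correct answer."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

def item_information(theta, a, b, c):
    """Fisher information of a 3PL item at ability theta."""
    p = p_correct(theta, a, b, c)
    return (a ** 2) * ((1.0 - p) / p) * ((p - c) / (1.0 - c)) ** 2

def pick_next(theta, pool, used):
    """Return the index of the unused item with the most information at theta."""
    candidates = [i for i in range(len(pool)) if i not in used]
    return max(candidates, key=lambda i: item_information(theta, *pool[i]))

# Hypothetical pool of (a, b, c) items spanning easy to hard.
pool = [(1.0, b, 0.2) for b in (-2.0, -1.0, 0.0, 1.0, 2.0)]
used = set()

first = pick_next(1.2, pool, used)   # current estimate: theta = 1.2
used.add(first)
second = pick_next(1.2, pool, used)  # next best once the first is used up
print(pool[first], pool[second])
```

With an estimate of 1.2, the item with difficulty 1.0 is chosen first, because items close to the current estimate carry the most information.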

This is why a well-built adaptive test can give you a reliable score in 21 questions that would take a linear test 50 or 60 questions to match. It's also why the test can feel relentlessly hard even when you're doing well. If the algorithm is working correctly, you should be getting roughly half your questions right. That's not failure. That's the test homing in on your exact ability level.

What you can learn that a simple quiz can't tell you

This is where the algorithm's complexity starts paying off for your prep, not just your score.

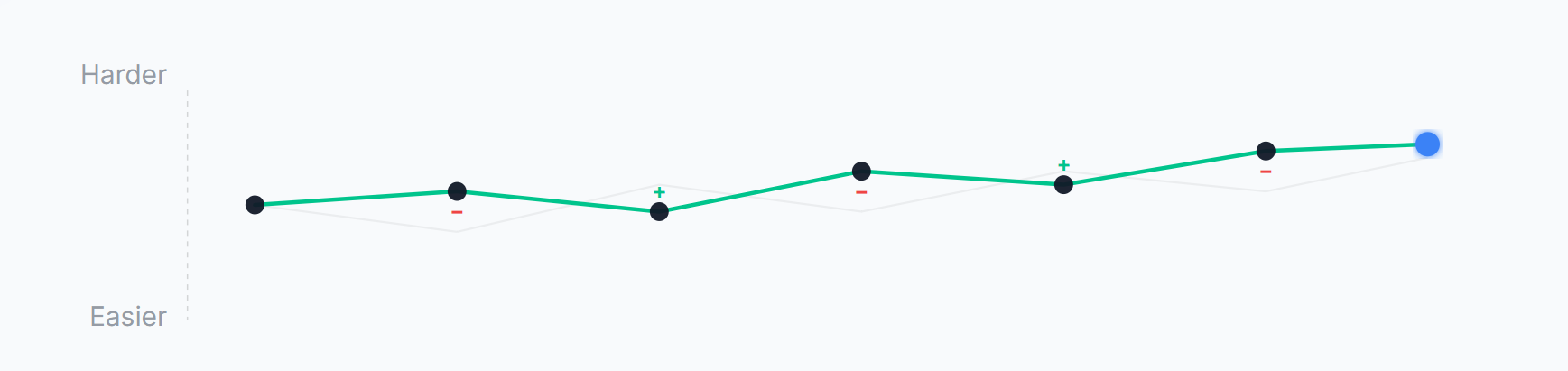

Because we track how the algorithm assessed your ability throughout the section, we can show you an ability estimate curve on your results page. It's a line that shows how the algorithm's opinion of you changed from question 1 to question 21. You can see whether you started strong and faded, whether you warmed up slowly and finished well, or whether your ability was consistent throughout. A linear test can't show you this because it doesn't have an adaptive estimate to track.
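A curve like this can be produced by re-estimating ability after every response. The sketch below uses an expected a posteriori (EAP) estimate with a standard normal prior, which is one common choice in the IRT literature. It is an assumption for illustration, not a description of how GMAT Club or GMAC computes the curve, and the items and responses are made up.

```python
import math

def p_correct(theta, a, b, c):
    """3PL probability of a correct answer."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

def ability_curve(items, responses):
    """EAP ability estimate after each response, using a N(0, 1) prior."""
    grid = [i / 10.0 for i in range(-40, 41)]
    weights = [math.exp(-0.5 * t * t) for t in grid]  # unnormalized prior
    curve = []
    for (a, b, c), correct in zip(items, responses):
        # Multiply the running posterior by this response's likelihood.
        for k, t in enumerate(grid):
            p = p_correct(t, a, b, c)
            weights[k] *= p if correct else (1.0 - p)
        total = sum(weights)
        curve.append(sum(t * w for t, w in zip(grid, weights)) / total)
    return curve

items = [(1.0, b, 0.2) for b in (0.0, 0.5, 1.0, 1.5, 1.0, 0.5)]
responses = [1, 1, 1, 0, 0, 1]  # strong start, a dip, then a recovery
for number, theta in enumerate(ability_curve(items, responses), start=1):
    print(f"after question {number}: theta = {theta:+.2f}")
```

Each correct answer nudges the estimate up and each miss pulls it down, so plotting the returned list against question number gives the shape of the section at a glance.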

Because we know the statistical properties of each question, we can benchmark your time against what other test-takers typically spend on that specific question. Not a generic "2 minutes per question" target, but an actual per-question expected pace. If you spent 4 minutes on a question that most people solve in 90 seconds, that's a different signal than spending 4 minutes on a question that typically takes 3.
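As a rough illustration of per-question pacing, the sketch below divides your time by the median time other test-takers spent on the same question. The function name `pace_ratio`, the use of the median, and the timing data are all hypothetical.

```python
from statistics import median

def pace_ratio(seconds_spent, peer_times):
    """Time spent divided by the median time peers spent on the same question."""
    return seconds_spent / median(peer_times)

# Hypothetical peer timings in seconds for two different questions.
quick_question = [70, 85, 90, 95, 120]    # median 90 seconds
slow_question = [150, 170, 180, 200, 240]  # median 180 seconds

print(round(pace_ratio(240, quick_question), 2))
print(round(pace_ratio(240, slow_question), 2))
```

The same 4 minutes is well over double the typical pace on the first question but only about a third over on the second, which is the difference in signal described above.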

And because the adaptive algorithm selects questions based on your performance, the pattern of what you saw tells a story. If the algorithm kept pushing you into Hard territory and you kept answering correctly, that's a stronger signal than getting 100% on a batch of Medium questions. The difficulty of the questions you received is itself a data point about your ability.

Time management changes completely

Here's something that surprised us when we were building the algorithm. Standard adaptive testing theory includes a concept called time budgeting. The idea is that the algorithm considers how much time you have remaining when selecting questions.

Say you have 3 minutes left and 3 questions to go. The algorithm could serve you a question that's the right difficulty but typically takes 3 minutes to solve. You'd barely finish it and have to blindly guess on the last two. Those guesses give the algorithm almost no useful data.

Instead, the system might find a question that's similar in difficulty but is proven to take only a minute on average. Not easier, just faster. By serving that item, the algorithm gives you a real shot at attempting all three remaining questions, which produces a more accurate final score.
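The scenario above can be sketched as a simple selection rule: work out a per-question time budget, keep only the items that fit it, and pick the one closest in difficulty to the current ability estimate. The function, the fallback rule, and the pool are assumptions for illustration. The actual time-budgeting logic of the official test is not public.

```python
def pick_with_time_budget(theta, pool, seconds_left, questions_left):
    """Pick the item closest in difficulty to theta that fits the time budget.

    pool: list of (difficulty, expected_seconds) tuples, both hypothetical.
    Falls back to the fastest item if nothing fits the budget.
    """
    budget = seconds_left / questions_left
    affordable = [item for item in pool if item[1] <= budget]
    if not affordable:
        return min(pool, key=lambda item: item[1])
    return min(affordable, key=lambda item: abs(item[0] - theta))

# 3 minutes left, 3 questions to go, current ability estimate of 1.0.
pool = [(1.0, 180), (0.9, 60), (-1.0, 45)]
choice = pick_with_time_budget(theta=1.0, pool=pool, seconds_left=180, questions_left=3)
print(choice)
```

With a 60-second budget per question, the 3-minute item is ruled out and the item of nearly the same difficulty that takes about a minute is served instead: not easier, just faster.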

This matters for your prep because it means the official GMAT has some tolerance for mild time pressure. It does not have tolerance for running out of time entirely. And this is where a lot of test-takers get into trouble. We looked at our recent test data: out of roughly 60,000 practice tests taken on GMAT Club, only about 11,000 were finished on time. That means more than 80% of those tests ended with the clock running out.

The penalty for leaving questions unanswered is severe. The GMAT penalizes blank answers more heavily than wrong answers. A wrong answer at least tells the algorithm something about your ability. A blank tells it nothing, and the system penalizes the absence of information. Always guess if you're running out of time. A random guess on a five-choice question has a 20% chance of being right. A blank has a 0% chance.

Why "close to the official test" matters

There are aspects of the GMAT's adaptive algorithm that GMAC keeps confidential, including the exact scoring weights, certain parameters of the item selection process, and specific exposure control mechanisms. We don't have access to those, and we're not claiming to.

What we have done is implement everything that's publicly known about how IRT-based adaptive testing works: the three-parameter item model, the adaptive item selection, the ability estimation, the time budgeting, and the fairness analysis. We calibrate our questions using real test-taker data. We run the same kind of statistical quality checks that any serious testing organization uses.

The result is a practice test where your score means roughly the same thing as your official score. If you're scoring 685 on our tests, you should be in the neighborhood of 685 on the real thing. Not because we copied the GMAT, but because we built on the same science.

That's the whole point. A practice test that's scored differently from the real test teaches you the wrong lessons. You optimize for the wrong things. You misread your readiness. A practice test that's scored the same way lets you trust the number and focus on actually getting better.

What does this all mean?

You don't need to understand IRT to benefit from it. The algorithm runs in the background and does its job. But knowing a few things about how it works can change how you approach your practice:

If the test feels hard, that might be a good sign. The algorithm is challenging you near your ceiling, which is where the measurement is most precise.

Your score is not a simple count of right and wrong answers. Two people with the same number correct can score differently. That's the system working as intended, not a mistake.

Never leave questions blank. A wrong answer is always better than no answer. The algorithm can work with a wrong answer. It can't work with silence.

Time management is a skill the test is measuring, even if indirectly. The algorithm tries to help by serving time-appropriate questions, but it can't save you from running out entirely.

And when you look at your results page after a practice test, the charts and insights you see aren't decoration. They're outputs of the same statistical engine that computed your score. The ability estimate curve, the pacing analysis, the focus areas: that's the algorithm telling you what it learned about your performance. Use it.

This post is adapted from our upcoming YouTube video "How the GMAT Algorithm Actually Works," which covers the full adaptive testing framework in detail.

Want to see these concepts in action? Try a GMAT Club practice test →
